
Scrape Amazon: How To Pull Prices, ASINs, Product Names, Etc.

Do not be intimidated by the complex services listed here. If you only scrape the top 100 products in one category a few times, you might not need them. You can often get the information without any trouble, and you have a range of tools to choose from. Take the WATCH category as an example: below is a sample of scraped data. On the sheet, you can see the product name, sponsored status, ASIN, rating, current price, and so on.
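As a rough illustration of the fields in that sheet, here is a minimal Python sketch of one scraped row. It also includes a helper that pulls the ASIN out of a product URL.

It rests on two assumptions:
- product URLs contain `/dp/<ASIN>` or `/gp/product/<ASIN>`;
- an ASIN is 10 uppercase letters or digits.

`ProductRow`, `extract_asin` and the example ASIN are hypothetical names, not part of any tool mentioned here.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Assumption: product URLs contain /dp/<ASIN> or /gp/product/<ASIN>,
# and an ASIN is 10 uppercase letters or digits.
ASIN_PATTERN = re.compile(r"/(?:dp|gp/product)/([A-Z0-9]{10})(?:[/?]|$)")


@dataclass
class ProductRow:
    """One row of the sample sheet (hypothetical field names)."""
    name: str
    sponsored: bool
    asin: str
    rating: Optional[float]  # star rating, None if the product has none yet
    price: Optional[float]   # current price, None if unavailable


def extract_asin(url: str) -> Optional[str]:
    """Return the ASIN found in a product URL, or None if there is none."""
    match = ASIN_PATTERN.search(url)
    return match.group(1) if match else None
```

For example, `extract_asin("https://www.amazon.com/Some-Watch/dp/B0EXAMPLE1/ref=sr_1_1")` returns `"B0EXAMPLE1"`. Keeping the ASIN in its own column makes it easy to de-duplicate rows across repeated runs.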


What Information Can You Scrape On Amazon

Keep in mind that these links may be relative, so you'll want to use the urljoin method to convert them to absolute URLs. Python is the core programming language for web scraping. If you don't have it yet, head over to python.org to download and install the latest version of Python. With rather basic functions, these options are suited to casual scraping or to small businesses looking for information in a simple structure and in small amounts. If you are pleased with the quality of the dataset sample, we complete the data collection and send you the results. We configure, deploy and maintain jobs in our cloud to extract data of the best quality.
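Here is a minimal sketch of that `urljoin` step with Python's standard library. It assumes the links were scraped from a search results page; `BASE_URL` and `to_absolute` are illustrative names.

```python
from urllib.parse import urljoin

# Hypothetical page the relative links were scraped from.
BASE_URL = "https://www.amazon.com/s?k=watch"


def to_absolute(links, base=BASE_URL):
    """Resolve each href against the page it came from; absolute hrefs pass through unchanged."""
    return [urljoin(base, href) for href in links]
```

For instance, `to_absolute(["/dp/B0EXAMPLE1"])` gives `["https://www.amazon.com/dp/B0EXAMPLE1"]`.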

- Discover the transformative power of web scraping in the finance sector.
- This is one of the most common methods among scrapers that track products on their own or as a service.
- You can do some basic math while building the algorithm.

Set up the 'Read data from Google Sheet' action with your sheet. The tool mimics human interaction to avoid triggering anti-scraping alarms. Pick a tool that fits your needs and create an account if required. Reviews matter because they can tell you what's good and bad about a product, which can help your business.

Locate And Scrape Product Prices

It's as simple as point and click; this video shows you exactly how.
- Enter URL: click 'Insert Data', select 'google-sheet-data' and choose the column that contains the links.
- Spreadsheet: in the field called 'Spreadsheet', search for the Google Sheet you created.

The tool stores data in cloud services, databases, or other destinations. You can use the information to understand the market better and to carry out market research. Paste the link into the tool and choose the part you want to scrape. Let's take a look at the structure of the product details page. Running the code with these changes will reveal the expected HTML with the product information. With Octoparse you can extract data from any website as you wish without writing a single line of code.


How To Scrape Amazon Product Data: A Comprehensive Guide To Best Practices & Tools

It is very important to make your User-Agent look as realistic as possible. However, to reach the product information, you will start from product listing or category pages. So far, we have explored how to scrape product information.
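Here is a minimal standard-library sketch of sending a browser-like User-Agent with each request. The header values are assumptions modeled on a desktop Chrome browser; replace them with the ones your own browser sends. `build_request` and `fetch` are hypothetical helpers, and `fetch` needs network access.

```python
import urllib.request

# Assumption: illustrative desktop-browser headers; copy current values from your browser.
BROWSER_HEADERS = {
    "User-Agent": (
        "Mozilla/5.0 (Windows NT 10.0; Win64; x64) "
        "AppleWebKit/537.36 (KHTML, like Gecko) "
        "Chrome/118.0.0.0 Safari/537.36"
    ),
    "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8",
    "Accept-Language": "en-US,en;q=0.9",
}


def build_request(url: str) -> urllib.request.Request:
    """Create a GET request that carries the browser-like headers."""
    return urllib.request.Request(url, headers=BROWSER_HEADERS)


def fetch(url: str, timeout: float = 10.0) -> str:
    """Download a page and decode it as UTF-8 (requires network access)."""
    with urllib.request.urlopen(build_request(url), timeout=timeout) as resp:
        return resp.read().decode("utf-8", errors="replace")
```

Note that `urllib.request.Request` stores header names with only the first letter capitalized. So you read them back with `req.get_header("User-agent")`.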

- Data scraping is a way to obtain information from websites automatically.
- There is a page limit (500/month) on the free plan of Data Miner.
- The numbers can then be shown in charts based on the extracted data.
- All your crawlers live on your computer and process data in your web browser.
- The process can seem complicated, but you can do it with the right tools and a detailed guide.

In that case, there are thousands of free crawling, scraping, and parsing scripts available in programming languages and frameworks such as Python, NodeJS, Scrapy, Java, PHP, and Ruby. These options share many of the same features, but Python appears to have the most extensive frameworks for web scraping. For tech-savvy users who relish a challenge, coding a custom scraper offers control and customization. Each of these components can be selected using its CSS selector and then extracted using methods similar to the previous steps. Scraper Parsers is a browser extension that extracts unstructured data and visualizes it without code.
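The article selects each component by CSS selector, which is typically done with BeautifulSoup's `select_one()` or with Scrapy selectors. To keep this sketch free of third-party dependencies, it uses the standard library's `html.parser` instead. It matches elements by their `id` attribute rather than by full CSS selectors.

The ids `productTitle` and `price` are assumptions. Inspect the live page with your browser's developer tools to find the real ones.

```python
from html.parser import HTMLParser

# Void elements never get an end tag, so they must not affect nesting depth.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "source", "track", "wbr"}


class IdTextExtractor(HTMLParser):
    """Collect the whitespace-normalized text inside elements whose id is wanted."""

    def __init__(self, wanted_ids):
        super().__init__()
        self.wanted_ids = set(wanted_ids)
        self.results = {}
        self._current = None  # id of the element being captured
        self._depth = 0       # nesting depth inside that element
        self._chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        if self._current is not None:
            self._depth += 1  # a child element opened inside the captured one
            return
        element_id = dict(attrs).get("id")
        if element_id in self.wanted_ids:
            self._current, self._depth, self._chunks = element_id, 1, []

    def handle_endtag(self, tag):
        if self._current is None or tag in VOID_TAGS:
            return
        self._depth -= 1
        if self._depth == 0:  # the captured element just closed
            self.results[self._current] = " ".join("".join(self._chunks).split())
            self._current = None

    def handle_data(self, data):
        if self._current is not None:
            self._chunks.append(data)


def extract_fields(html, wanted_ids=("productTitle", "price")):
    """Return {id: text} for each wanted id found in the HTML."""
    parser = IdTextExtractor(wanted_ids)
    parser.feed(html)
    parser.close()
    return parser.results
```

With BeautifulSoup installed, a comparable lookup would be `soup.select_one("#productTitle").get_text(strip=True)`.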

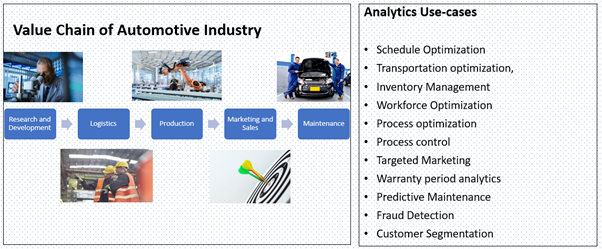

Step 4: Determine Pagination

The same has happened to sellers who are now setting up stores and selling online on Walmart, Flipkart, eBay, Alibaba, etc. However, to capture a shopper's attention and convert them into a customer, e-commerce sellers need to use data analytics to optimize their offerings.

Scraping product reviews can be more complex, since one product can have many reviews. Not to mention, a single review might include a lot of information that you may want to capture.

There is no limit on the data scraped, even on a free plan, as long as you keep your data within 10,000 rows per task. If you upgrade to any paid plan, you get more powerful features such as cloud servers, scheduled automatic scraping, IP rotation, CAPTCHA solving, and so on.
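For the pagination step named in the heading, one common approach is to generate listing-page URLs by changing a page-number query parameter. This sketch assumes the listing uses a parameter named `page`; confirm that by clicking through the pages in your browser. `page_urls` and the example search URL are hypothetical.

```python
from urllib.parse import parse_qs, urlencode, urlparse, urlunparse


def page_urls(listing_url: str, last_page: int, param: str = "page"):
    """Return URLs for pages 1..last_page by setting one query parameter."""
    parts = urlparse(listing_url)
    query = parse_qs(parts.query)  # existing parameters, e.g. {"k": ["watch"]}
    urls = []
    for page in range(1, last_page + 1):
        query[param] = [str(page)]
        urls.append(urlunparse(parts._replace(query=urlencode(query, doseq=True))))
    return urls
```

For example, `page_urls("https://www.amazon.com/s?k=watch", 3)` yields three URLs. They end in `&page=1`, `&page=2` and `&page=3`, after the existing `k=watch` parameter.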

Scraping Amazon Best Sellers List

Make sure your fingerprint parameters are consistent, or choose Web Unblocker, an AI-powered proxy service with dynamic fingerprinting capability. We can read the href attribute of this selector and run a loop over the results. You will need to use the urljoin method to resolve these links. Then we sample the data and send it to you for review. Product variations follow the patterns we have described above and are also presented on the website in different ways. And instead of being rated on one version of a product, ratings and reviews are often rolled up and shared across all available variants.
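Reading the `href` attribute of each matching link and looping over the results can be done with the standard library alone. This sketch treats any `<a>` whose `href` contains `/dp/` as a product link. That is an assumption about the URL layout, not a documented rule. Each link is then resolved with `urljoin`. The class name and the base URL are hypothetical.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class ProductLinkCollector(HTMLParser):
    """Collect absolute URLs of <a> tags whose href looks like a product link."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        # Assumption: product pages contain "/dp/" in their path.
        if href and "/dp/" in href:
            absolute = urljoin(self.base_url, href)
            if absolute not in self.links:  # keep the first occurrence only
                self.links.append(absolute)


def product_links(html, base_url):
    """Return de-duplicated absolute product URLs found in a listing page."""
    collector = ProductLinkCollector(base_url)
    collector.feed(html)
    collector.close()
    return collector.links
```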

The Future Of Web Scraping Facebook In 2023: An Extensive Guide

There are a number of software applications available for screen scraping, such as UiPath, Jacada, and Macro Scheduler. These tools let users extract data from visual user interfaces, making them an effective solution for preserving data and streamlining data migration processes. There are many compelling academic and business use cases that highlight the importance of collecting and analyzing public web data. For instance, leading companies use this technology to gather information on the state of markets, competitor intelligence such as prices and stock levels, and consumer sentiment. Stay ahead of the curve and unlock the power of web data extraction. In today's data-driven world, companies that embrace web data extraction and unlock insights from that data are ahead of the game. Always keep legal and ethical considerations in mind when scraping websites, and respect each site's terms of service and the applicable legal guidelines.

An Introduction To Web Scraping And Alternative Data

In the future, businesses will rely even more on web scraping services and tools to get fresh, ready-to-use data in order to perform reliable risk assessments. However, scraping these websites is becoming increasingly difficult. Many social media sites now require logins to access their data, making it harder for scrapers to collect the desired information. E-commerce websites are also leading the way with more sophisticated anti-scraping measures.

Over the past few years, data scraping has become an increasingly popular way to get data from a website and enter it into a new spreadsheet. Today, practically every data scraper uses this approach to collect as much data as possible for presentation, processing, or analysis. Web scraping refers to the process of extracting valuable data from websites.

Unlock Growth For Your Business With Our AI-Powered Data Extraction System

You can use data scraping to set the price of your goods and to estimate the number of potential customers. This type of analysis has long been a prime use of data scraping by professionals. Furthermore, it gives companies a competitive advantage, letting them respond promptly to changes in competitors' pricing strategies and make data-driven decisions.

- Bright Data: Bright Data is a leading data extractor with a simple interface.
- Extracted or scraped data can be put to meaningful use in business strategy and in growing an organization from the ground up.
- Moreover, according to Tomas, generative AI is increasingly used in business use cases.
- Also called screen scrapers, they can be used to move data from older apps that lack export functions into newer applications.
- The main advantage of automated web scraping is that it lets you collect data much more quickly, efficiently, and thoroughly than you could by hand.

So they build firewalls around their information and protect it from being compromised. Regardless of the security, data scraping extracts what it wants and uses it as it pleases. But it can be likened to stealing, because most companies that publish data on their websites are possessive of it. The data is there, but not for public consumption, and they would not want any unauthorized person accessing it or sharing it indiscriminately. Data scraping used to be a technique that was generally deployed as a last resort, when other options for exchanging data between two programs or systems had failed.

❌ Power-ups Proceeded With Anti-scraping Protections

And the best part is that the process you've set up will keep feeding your spreadsheet with the same data from that website whenever it's updated. As we look ahead to 2023, several key trends are set to shape the future of Facebook web scraping. A proxy server acts as an intermediary, preventing direct communication between the device running the scraper and the web server. Every request from the device and every response from the website goes through the proxy first, hiding the device's real IP address and location. The leading reasons startups fail are insufficient market research, poor business strategies, and inadequate marketing.

What Is Data Scraping? A Beginner's Guide

Apify David Barton

However, one difference brought by the recent progress in AI is a new baseline for upcoming web scraping startups. New web scraping services no longer need to start from zero and can build their services with AI in mind. Of course, after this year you can also expect a lot of abuse of the term, so stay SEO-vigilant on the web. As concern over data privacy becomes increasingly pressing, websites will keep implementing stricter measures against web scraping. These could include browser fingerprinting, IP blocking, putting data behind logins, and more robust security measures to stop unauthorized access to their data. State institutions have also started publicly recognizing the value of automated web data collection.

- Many other websites are also in the business of using bots to scrape other websites for reviews.
- Scrapers also mimic genuine traffic, which distorts the accuracy of web analytics.
- Python also leads by a wide margin in Google searches, which suggests Python is the number one choice in discovery and beginner web scraping queries.

Its integration with numerous applications via APIs and webhooks is an added benefit here. Imagine scraping data manually, or having to remind the data scraping software to do its job. PwC embraces automation wholeheartedly and affirms that intelligent automation will soon recover lost efficiency. Data scraping is done with written code or computer programs in the form of scraper bots. These tools offer APIs or other user interfaces that let both technical and non-technical people scrape data easily. Pre-built scrapers may not be as customizable as self-built ones, but they are convenient and require minimal technical expertise. That makes them a popular choice for many users. Large websites usually use defensive algorithms to protect their data from web scrapers and to limit the number of requests an IP or IP network may send. This has created an ongoing battle between website developers and scraper developers. The key element that distinguishes data scraping from regular parsing is that the scraped output is intended for display to an end user, rather than as input to another program. It is therefore usually neither documented nor structured for convenient parsing.

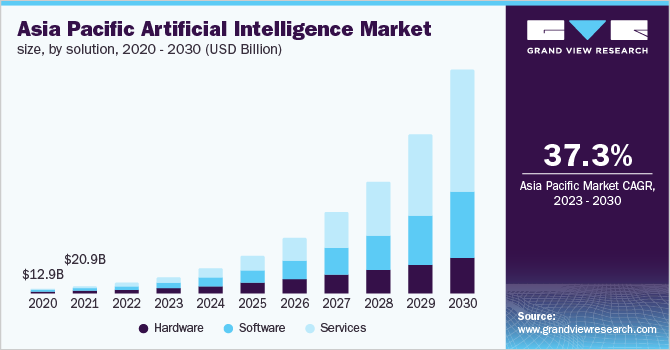

Why Python Is The Go-to Language For Web Scraping

While it is true that web scraping usually makes the news because of legal battles, in 2022 there were many other reasons the term reached mainstream media. Playwright is a newer library that has gained popularity for its modern features, cross-browser support, and ease of use in multiple languages. Well, 2022 has shown us: not that much, and everything at the same time. Let's dive into what web scraping looked like in 2022 and how it may develop in 2023. Each technique has its own set of tools and applications, making data scraping a flexible solution for data gathering and analysis.

In this article, we will discuss the top 10 scraping tools in 2023, their features, and how to choose the best one for your needs. We will also delve into the future of web scraping and the trends shaping the industry. So whether you are a seasoned web scraper or just starting out, this article offers valuable insights to help you choose the best scraping tool for your needs.

In recent years, web scraping and alternative data have become increasingly popular among businesses and individuals alike. These data sources provide a wealth of information that can be used to gain insights, make informed decisions, and stay ahead of the competition. Yet only 25% of executives say their companies have built a data-driven organization, according to the Harvard Business Review. And only 20.6% of executives report having built a data culture within their organizations. Use BeautifulSoup or Scrapy to parse the HTML and extract the desired data.
Ultimately, legal considerations and regulations could slow the industry down. The world is estimated to create, consume, and store about 150 trillion gigabytes of data.

Why Do Businesses Need Data Scraping?

Data scraping is a technique in which a computer program extracts data from human-readable output produced by another program. With advances like ChatGPT, incorporating artificial intelligence into web scraping has become more accessible. Even regular developers can now use AI in their scraping processes.

Data science combines, among other things, mathematics, programming, and statistics to study data and identify trends. The world today is data-driven, and the future of data science is bright. Even when you account for the Earth's entire population, the average person was expected to generate 1.7 megabytes of data per second by the end of 2020, according to cloud provider Domo. As a result, the data extraction space in general, and web scraping in particular, is expected to become an increasingly complex domain. It will demand ever-higher levels of specialized knowledge and expertise.

Contact us today to learn more about how we can help you navigate anti-scraping measures and extract web data with confidence. Additionally, companies must build robust data validation capabilities to guarantee data quality that meets the specific requirements of the business. To stay ahead of the curve, it is important to understand and act on the latest trends and predictions in the ever-evolving space of web data extraction and big data.


Challenges Of Data Scraping In The Future

Given the bright future of data scraping, now is the right time to enroll in a data science course, gain more insight into data scraping, and earn a lucrative income. Web scraping is the process of extracting data from a website using crawlers and scrapers. It involves sending a request to a website, parsing the HTML content, and extracting the desired data.

Web Scraping Solutions: The Ultimate Guide To Picking The Best Tool For You

Doesn't every company want to know how much its brand is worth and how to keep it growing? Data scraping solutions can pull relevant data from a variety of sources to help you track, measure, and monitor your progress over time. They also let you compare your company with your competitors, analyze tweets and posts, and deliver actionable insights. The fact that real estate sales and operations have moved online over the last two decades puts the traditional agency at risk of collapse.

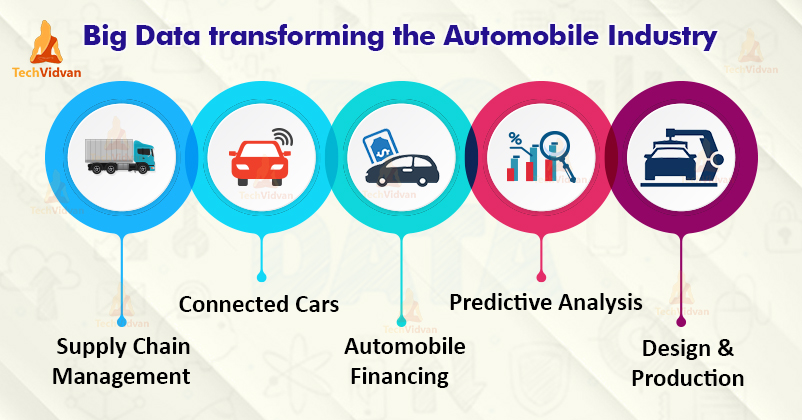

- As the digital economy expands, the role of web scraping becomes ever more important.
- Brand monitoring: a practice companies use to understand how consumers perceive their brand, monitor their competitors, and forecast market performance.
- And if you have a sophisticated scraper, you should be getting JSON output, which can also be used for an API.
- Web scraping bots essentially do exactly that, only on a larger scale.
- With this rise, data analytics has become a vitally important part of how businesses are run.

On the other hand, choosing between shared and dedicated proxies depends on your priorities and on how large your project is. A lot of consideration goes into that choice, from the size of your web scraping project to your budget and the performance you need. In most cases, if your project is not very large and performance is not an issue, you can opt for a shared proxy, where you pay for access to a pool of IPs. When the project is large and you care a lot about performance, you should opt for a dedicated proxy. Whatever the case, always avoid public or open proxies, as they are of low quality and can pose serious risks to your system.
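For the shared-proxy case, where you pay for access to a pool of IPs, a common pattern is to rotate requests through the pool. This is a minimal standard-library sketch of that rotation. The addresses are placeholders from the 203.0.113.0/24 documentation range, and `opener_cycle` is a hypothetical helper.

```python
import itertools
import urllib.request

# Placeholder endpoints (documentation addresses); use the ones your provider gives you.
PROXY_POOL = [
    "http://203.0.113.10:8080",
    "http://203.0.113.11:8080",
    "http://203.0.113.12:8080",
]


def opener_cycle(pool):
    """Endlessly yield (proxy, opener) pairs that route traffic through each proxy in turn."""
    for proxy in itertools.cycle(pool):
        handler = urllib.request.ProxyHandler({"http": proxy, "https": proxy})
        yield proxy, urllib.request.build_opener(handler)
```

Usage looks like this:
1. Create the generator with `openers = opener_cycle(PROXY_POOL)`.
2. For each request, call `proxy, opener = next(openers)`.
3. Send it with `opener.open(url, timeout=10)`.

With a dedicated proxy, the pool would simply hold your own endpoint or endpoints.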

What Exactly Does A Web Scraping Infrastructure Do And How Does It Help You?

Web scraping, which automatically collects data from the internet, is used in many fields today. With web scraping, you can quickly compare prices and collect valuable data from competitors' websites, which can be crucial for making informed business decisions. Using web scraping to obtain information that is freely available online is generally legal. Be careful when scraping personal data, copyrighted material, or confidential information, since some types of data are protected by laws worldwide. To build ethical scrapers, respect your target websites and show consideration for them.

Only real-time monitoring of the prices competitors charge on their websites makes this practical. The business can then use it as a guide for product improvement or design. Scraping in this case is useful not only for companies that base their pricing policy on competitors. It also helps those who use online shopping services, track product prices, and look for items in several stores at once. Scraping tools help collect and organize mailing addresses and contact information from websites and social networks. In this way, it is possible to compile contact lists and all the information a business needs.

#3 Consider The Costs And Budget

You also need to follow good web scraping practices, such as limiting the frequency and volume of your requests, using proxies or VPNs, and identifying yourself with a user-agent. HTML defines the structure and content of a web page, while CSS defines its layout and appearance. To scrape data from a website, you need to understand how HTML and CSS work and how to locate the elements you want to extract.
You need to know how to use HTML tags, attributes, and selectors, as well as CSS properties, values, and pseudo-classes. You also need to know how to use tools such as browser developer tools, XPath, and CSS selectors to inspect and query web pages. Web scraping as a service means outsourcing your web scraping to a company or vendor that already has the infrastructure (e.g. bot licenses, browser extensions, IT staff).
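Two of the good practices listed above, limiting how often you send requests and identifying yourself with a user-agent, can be handled with Python's `urllib.robotparser`. It also reads a site's `Crawl-delay`. To run offline, this example parses an inline robots.txt. The rules, the bot name and the one-second fallback delay are all assumptions. In practice you would call `set_url()` and `read()` on the real file.

```python
import time
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content used for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /gp/cart
Crawl-delay: 2
"""

USER_AGENT = "example-research-bot"  # hypothetical identifier for your scraper


def load_rules(text: str) -> RobotFileParser:
    """Parse robots.txt text without touching the network."""
    rules = RobotFileParser()
    rules.parse(text.splitlines())
    return rules


def polite_schedule(urls, rules, user_agent=USER_AGENT, default_delay=1.0):
    """Yield (url, delay) for allowed URLs, using Crawl-delay when the site sets one."""
    delay = rules.crawl_delay(user_agent) or default_delay
    for url in urls:
        if rules.can_fetch(user_agent, url):
            yield url, delay


def run(urls, rules, fetch):
    """Call fetch(url) for each allowed URL, sleeping between requests."""
    for url, delay in polite_schedule(urls, rules):
        fetch(url)
        time.sleep(delay)
```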


Data Integration Guide To Scalable Data

Data may also come in different structures and schemas, which further complicates the integration process. To address this challenge, companies can use data integration tools that support a wide range of data formats and offer built-in data transformation capabilities. These tools can automatically convert data from one format to another, making it easier to integrate and analyze. Any third-generation system will use statistics and machine learning to make automatic or semi-automatic curation decisions. In fact, it will use sophisticated techniques such as t-tests, regression, predictive modeling, data clustering, and classification. Many of these techniques rely on training data to set internal parameters.
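As a small illustration of converting data from one format to another during integration, this sketch turns CSV text into JSON. It also converts column types along the way. The column names and the `schema` mapping are hypothetical.

```python
import csv
import io
import json


def csv_to_json(csv_text, schema=None):
    """Convert CSV text (header row + data rows) into a JSON array of objects."""
    schema = schema or {}
    records = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        # Apply per-column converters such as {"price": float}; other columns stay strings.
        records.append({key: schema.get(key, str)(value) for key, value in row.items()})
    return json.dumps(records, indent=2)
```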


SpatialAPI '20: Proceedings Of The Second ACM SIGSPATIAL International Workshop On Geospatial Data Access And Processing APIs

Deploying your pipeline means moving it from your development or testing environment to your production environment, where it will run on a regular schedule. Follow best practices for deployment, such as using version control, automation, documentation, and backups. Monitoring your pipeline means tracking its performance, status, and health, as well as any anomalies or failures that occur. Use monitoring tools and metrics, such as dashboards, alerts, logs, or reports, to make sure your pipeline runs smoothly and efficiently. Testing and debugging are critical for ensuring that your pipeline works as expected and that your data quality is maintained. Run different kinds of tests, such as unit tests, integration tests, performance tests, and end-to-end tests, to verify that your pipeline can handle different cases and scenarios.
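To make the unit-testing advice concrete, here is a minimal `unittest` sketch for one transformation step of a scraping pipeline. `normalize_price` is a hypothetical transform. It assumes US-style price strings such as `$1,299.00`.

```python
import unittest


def normalize_price(raw: str) -> float:
    """Hypothetical transform: turn a scraped price string like '$1,299.00' into a float."""
    cleaned = raw.strip().replace("$", "").replace(",", "")
    if not cleaned:
        raise ValueError("empty price")
    return float(cleaned)


class NormalizePriceTest(unittest.TestCase):
    def test_plain_value(self):
        self.assertEqual(normalize_price("$99.99"), 99.99)

    def test_thousands_separator(self):
        self.assertEqual(normalize_price(" $1,299.00 "), 1299.0)

    def test_empty_input_is_rejected(self):
        with self.assertRaises(ValueError):
            normalize_price("   ")


if __name__ == "__main__":
    unittest.main()
```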

- Widespread use of data analytics can help teams make smart decisions quickly and more accurately than ever before, while removing blockers that hamper collaboration.
- Another challenge is the complexity of integrating diverse data formats and structures.
- Our Data Migration service combines technology with best practices to keep all your ongoing data migrations on track, on time, and on budget.
- The Databricks Lakehouse Platform is well suited to handling large amounts of streaming data.

Our Data Migration is an intuitive, secure and quickly deployable solution that lets organizations seamlessly move large volumes of data, while delivering operational agility and cost savings. Scalable data integration techniques also play a critical role in ensuring data quality and consistency. Data quality issues, such as duplicates, inconsistencies, and errors, can significantly reduce the reliability and usefulness of data.
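The data quality issues named above, duplicates, inconsistencies and errors, can be illustrated with a small cleaning step. This sketch assumes each record is a dict keyed by a hypothetical `asin` field. It does three things:
- drops records without a key;
- normalizes case and whitespace;
- keeps the first copy of each duplicate.

```python
def clean_records(records, key="asin"):
    """Drop unidentifiable records, normalize the key, and remove duplicates."""
    seen = set()
    cleaned = []
    for record in records:
        raw_key = record.get(key)
        if raw_key is None or not str(raw_key).strip():
            continue  # error: the record cannot be identified
        normalized = str(raw_key).strip().upper()  # inconsistency: case and whitespace
        if normalized in seen:
            continue  # duplicate: keep only the first occurrence
        seen.add(normalized)
        cleaned.append({**record, key: normalized})
    return cleaned
```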

A Scalable Data Integration And Analysis Architecture For Sensor Data Of Pediatric Asthma

Choose your preferred data integration engine in AWS Glue to support your users and workloads. Modernizing its data architecture enabled Flagstar Bank to deliver faster and more accurate data to its customers through their personalized solutions. AWS Glue Studio Job Notebooks provide serverless notebooks with minimal setup in AWS Glue Studio, so developers can get started quickly. With AWS Glue Studio Job Notebooks, you have access to a built-in interface for AWS Glue Interactive Sessions, where you can save and schedule your notebook code as AWS Glue jobs.

Enterprise IoT

Then, learn how to organize data as part of implementing DataOps with IBM Cloud Pak® for Data: set up IBM Cloud Pak for Data on Red Hat® OpenShift®, set up governance artifacts for the data, and more. Get a single, trusted, 360-degree view of your data and help users know their data. Catalog, protect and govern all data types, trace data lineage and manage data lakes. Highlighting customers and partners who have transformed their businesses with SnapLogic.

Scalable Data Transformation Techniques For Efficient ETL Processes

With this ETL marketing tool, businesses can build a user-friendly interface between the various parts of their organization, from vendors to staff to customers. Improvado supports data integration with over 500 platforms, including advertising networks, payment processors, CRM systems, marketing automation tools, and social media platforms.

Another challenge is the complexity of integrating diverse data formats and structures. Traditional approaches require extensive coding and manual mapping to transform data into a standardized format that can be easily integrated. This not only takes significant time and effort but also increases the risk of errors or inconsistencies in the integrated dataset.

These tools are instrumental in helping companies avoid data silos, improve data quality, and save a lot of reporting time through automated data pipelines. The platform offers a rich library of transformation functions that let users clean, filter, aggregate, and manipulate data to suit their needs. It fully supports complex transformations, letting users join multiple datasets and apply custom business logic. With PowerCenter, you can meet your ETL needs in one place, including analytics, data warehouse, and data lake solutions.

These tools extract data from a variety of sources using batch processing. Because the approach uses limited resources efficiently, it is cost-effective. Growing data volumes have led to the emergence of scalable data transformation techniques that can handle them efficiently. They improve both the efficiency and the effectiveness of ETL processes.
These techniques harness distributed computing and parallel processing to handle large volumes of data in a timely manner. They break data transformation jobs into smaller, more manageable pieces. Organizations can then process data in parallel, significantly reducing the overall processing time. Fivetran is a cloud-based ELT data integration platform that offers a simple, reliable way to replicate and synchronize data into your cloud data warehouse. It lets you set up "connectors" between your data sources (mainly SaaS applications, cloud services, and cloud databases) and your cloud data warehouse.
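The transformations described above (cleaning, filtering, aggregating, and joining datasets with custom logic) can be sketched in plain Python. This sketch assumes hypothetical product and review records that share an `asin` field. It joins reviews to products, filters out reviews without a rating, and computes the average rating per product.

```python
from collections import defaultdict


def join_and_aggregate(products, reviews):
    """Left-join reviews onto products by 'asin' and add review_count and avg_stars."""
    stars_by_asin = defaultdict(list)
    for review in reviews:
        if review.get("stars") is not None:  # filter: skip unrated reviews
            stars_by_asin[review["asin"]].append(review["stars"])
    summary = []
    for product in products:  # products without reviews are kept with avg_stars=None
        stars = stars_by_asin.get(product["asin"], [])
        avg = round(sum(stars) / len(stars), 2) if stars else None
        summary.append({**product, "review_count": len(stars), "avg_stars": avg})
    return summary
```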

Fivetran - Fast ETL With Fully Managed Connectors

Improvado is an advanced marketing analytics solution powered by an enterprise-grade ETL. By prioritizing security measures, companies can preserve the trust and privacy of their data throughout the ETL process. CloudZero is the only solution that lets you allocate 100% of your spend in hours, so you can align everyone around the cost dimensions that matter to your business. You can determine where to cut costs or spend more to earn higher returns. For example, you can tell which customer's subscription contract to review at renewal to protect your margins. Or you can identify the most profitable customer segments, then target them more to boost revenue. And if you don't have your own data warehouse or the expertise to manage one, Improvado offers data warehouse management services. Once all the data sources are available on screen, they can be linked together to form a database schema. The administrator then uses it to gain useful insights into several business areas, including sales performance and business quality. Parabola is one of the most interactive ETL marketing tools on the market today.


Top 6 Tools To Improve Your Performance In Snow

With traditional on-premise solutions, you would need to invest in expensive hardware and software licenses to handle growing data volumes. Cloud-based ETL services, by contrast, offer a pay-as-you-go model where you only pay for the resources you use. This removes upfront costs and lets you scale your operations up or down as needed without additional investment. Scalable and parallel processing techniques significantly improve performance in ETL architectures. By distributing data processing tasks across available resources, organizations can achieve faster processing and efficiently handle growing data volumes.
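Distributing processing across available resources usually means splitting the data into chunks and transforming the chunks concurrently. This sketch uses `concurrent.futures.ThreadPoolExecutor` so that it runs anywhere. For CPU-bound pure-Python transforms, the usual swap is `ProcessPoolExecutor`, used under an `if __name__ == "__main__":` guard. `transform_chunk` and the `cents` field are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def chunked(items, size):
    """Split a list into consecutive chunks of at most `size` items."""
    return [items[i:i + size] for i in range(0, len(items), size)]


def transform_chunk(rows):
    """Hypothetical per-chunk transform: trim names and convert cents to dollars."""
    return [{"name": r["name"].strip(), "price": r["cents"] / 100} for r in rows]


def parallel_transform(rows, chunk_size=1000, workers=4):
    """Transform chunks concurrently and flatten the results in input order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # Executor.map yields results in the order the chunks were submitted.
        return [row for chunk in pool.map(transform_chunk, chunked(rows, chunk_size))
                for row in chunk]
```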

- "EL" tools like Fivetran and Matillion simplify moving data from multiple sources into your cloud data warehouse.
- With AWS Glue, you can scale your ETL processes to match your needs, balancing performance and cost-effectiveness.
- IoT devices can include factory equipment, network servers, smartphones, or a wide range of other machines, even wearables and implanted devices.

They allow companies to extract, transform, and load data from diverse sources into target systems efficiently. Distributed processing frameworks, parallelization techniques, efficient data storage, and fault-tolerance measures are key considerations for scalability. Finally, companies should consider automating their data transformation processes to ensure scalability and repeatability. By using workflow management tools or ETL frameworks, organizations can automate the execution of data transformation tasks, reducing manual effort and producing consistent, reliable results. Modern ETL tools are designed to streamline the ETL process, reduce errors, and improve the overall efficiency of data integration and analytics workflows. ETL pipelines have been a vital part of data integration for many years. As data volumes grew and the types of data sources became more complex, it became clear that more flexible and easy-to-use ETL solutions were needed. This led to the development of modern ETL tools designed to meet these new challenges. In another case study, a financial services company was struggling with the increasing complexity of its ETL processes.
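A minimal extract-transform-load pipeline shows how automating the transformation step makes runs repeatable. This sketch assumes a small CSV export held in a string and an in-memory SQLite database as the target; the column names and sample ASINs are hypothetical.

```python
import csv
import io
import sqlite3

# Hypothetical raw export: the second row has no price and should be dropped.
RAW_CSV = """asin,title,price
B000000001, Sample Watch ,19.99
B000000002,Another Watch,
"""


def extract(text: str) -> list[dict]:
    """Extract: parse CSV text into a list of dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows: list[dict]) -> list[tuple]:
    """Transform: drop rows without a price, trim titles, convert prices to float."""
    clean = []
    for row in rows:
        if not row["price"]:
            continue
        clean.append((row["asin"], row["title"].strip(), float(row["price"])))
    return clean


def load(rows: list[tuple], conn: sqlite3.Connection) -> None:
    """Load: upsert rows keyed on ASIN so reruns do not create duplicates."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS products (asin TEXT PRIMARY KEY, title TEXT, price REAL)"
    )
    conn.executemany("INSERT OR REPLACE INTO products VALUES (?, ?, ?)", rows)
    conn.commit()


def run_pipeline(text: str, conn: sqlite3.Connection) -> None:
    load(transform(extract(text)), conn)
```

Because the load step uses `INSERT OR REPLACE` keyed on the ASIN, running the pipeline twice leaves the table in the same state instead of duplicating rows, which is what makes a scheduled, automated run safe to repeat.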

Top 7 Amazon Scraping Tools In 2023

One last thing we can scrape from a product page is its reviews. Note also that sending a complete set of browser-like headers often lets you fetch the page without JavaScript rendering. If you do need rendering, you will need a tool like Playwright or Selenium. If you need to scrape more, Professional and other paid plans are available. After entering all the keywords you want, click the "Start" button to launch the scraper. When the run finishes, you can export the extracted data to formats such as Excel, CSV, and JSON, or to destinations such as Google Sheets for further use.
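As a sketch of sending browser-like headers with Python's standard library: the header values below are illustrative assumptions, not a set Amazon is known to accept, and whether a given page renders without JavaScript has to be checked case by case. `Accept-Encoding` is left out on purpose because `urllib` does not decompress gzip responses on its own.

```python
import urllib.request
from typing import Optional

# Illustrative desktop-browser headers (assumed values, adjust as needed).
BROWSER_HEADERS = {
    "User-Agent": (
        "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
        "(KHTML, like Gecko) Chrome/120.0 Safari/537.36"
    ),
    "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8",
    "Accept-Language": "en-US,en;q=0.9",
}


def build_request(url: str, headers: Optional[dict[str, str]] = None) -> urllib.request.Request:
    """Attach the header set to a GET request without sending it."""
    return urllib.request.Request(url, headers=headers or BROWSER_HEADERS)


def fetch_html(url: str, timeout: float = 10.0) -> str:
    """Send the request and decode the body using the charset the server declares."""
    with urllib.request.urlopen(build_request(url), timeout=timeout) as resp:
        charset = resp.headers.get_content_charset() or "utf-8"
        return resp.read().decode(charset, errors="replace")
```

Separating `build_request` from `fetch_html` makes it easy to inspect exactly which headers go out before any network call is made.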

# Set Up The 'Delete Rows From A Google Sheet' Step

Data A - Click 'Select data', then choose 'google-sheet-data' (the web page link).

Last cell - Enter 'A1'; the bot will now pass only a single row of data. If you have more than one column of data, change the value: for example, enter 'D1' to include 4 columns.

Set up the 'Jump to another step' step and set max cycles to 1, with 6 as the step to jump to.
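The first and last cells define the block of the sheet the step works on, so the number of columns it covers follows from the column letters. A small Python helper, assuming the first cell is A1 (the text above only names the last cell), shows the arithmetic: A1 to D1 spans four columns, while A1 to AD1 spans thirty. The function names are hypothetical.

```python
import re

# A cell reference in A1 notation: column letters followed by a row number.
CELL = re.compile(r"([A-Za-z]+)(\d+)")


def column_index(letters: str) -> int:
    """Convert column letters to a 1-based index: A -> 1, Z -> 26, AA -> 27."""
    index = 0
    for ch in letters.upper():
        index = index * 26 + (ord(ch) - ord("A") + 1)
    return index


def columns_in_range(first_cell: str, last_cell: str) -> int:
    """Number of columns spanned from first_cell to last_cell, inclusive."""
    first = CELL.fullmatch(first_cell)
    last = CELL.fullmatch(last_cell)
    if first is None or last is None:
        raise ValueError("cells must look like 'A1' or 'AD12'")
    return column_index(last.group(1)) - column_index(first.group(1)) + 1
```

For example, `columns_in_range("A1", "D1")` gives 4, matching a sheet with four data columns.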

What Can You Get From Amazon Data Scraping

Make your scraper resilient by wrapping requests in try-catch blocks, so that the code does not stop at the first network error or timeout. You can do this once you have extracted the full HTML structure of the target page. Review data: optimize your product development, product management, and customer journey by scraping product reviews for analysis. Web scraping APIs may look like the most expensive option, but weigh that against the value they bring to the table.
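A sketch of the try-catch idea in Python, with retries and exponential backoff: the function and parameter names are hypothetical, and `fetch` stands for whatever call downloads the page. Only network-level failures are retried; any other exception propagates immediately.

```python
import time
import urllib.error
from typing import Callable, TypeVar

T = TypeVar("T")


def with_retries(
    fetch: Callable[[], T],
    attempts: int = 3,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fetch(), retrying on network errors and timeouts with exponential backoff."""
    for attempt in range(1, attempts + 1):
        try:
            return fetch()
        # URLError also covers HTTPError, so HTTP error statuses are retried too.
        except (urllib.error.URLError, TimeoutError):
            if attempt == attempts:
                raise  # give up and surface the last error
            # Wait base_delay, then 2x, 4x, ... between attempts.
            sleep(base_delay * 2 ** (attempt - 1))
    raise RuntimeError("attempts must be at least 1")
```

The `sleep` parameter is there so tests can record the delays instead of waiting; in production you would also want to stop retrying on permanent errors such as 404.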

- If you have a smaller-scale scraping project, you can crawl each keyword's category list.
- Web scraping services, on the other hand, can handle many of the issues mentioned above.
- If you are asking how to scrape a website, you are probably new to web scraping.
- Upgrade to the Professional plan to get 10,000 rows per day.
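For a small keyword-by-keyword crawl, the request URLs can be generated up front. This sketch assumes Amazon search pages follow the pattern `https://www.amazon.com/s?k=<keyword>&page=<n>`; treat that as an assumption to verify against the live site. Relative product links such as `/dp/...` found in the HTML can be made absolute with `urljoin`. `BASE_URL`, `search_urls`, and `absolute_link` are hypothetical names.

```python
from urllib.parse import urlencode, urljoin

BASE_URL = "https://www.amazon.com/"  # assumed storefront root


def search_urls(keywords: list[str], pages: int = 1) -> list[str]:
    """Build one search URL per keyword per results page (assumed URL pattern)."""
    urls = []
    for keyword in keywords:
        for page in range(1, pages + 1):
            # urlencode escapes the keyword, e.g. spaces become '+'
            query = urlencode({"k": keyword, "page": page})
            urls.append(urljoin(BASE_URL, "s?" + query))
    return urls


def absolute_link(href: str) -> str:
    """Resolve a relative link from a results page against the base URL."""
    return urljoin(BASE_URL, href)
```

Generating the URL list first keeps the crawl predictable: you know exactly how many requests a run will make before it starts.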
